Executive Summary

Clawdbot, the rapidly adopted open-source AI agent gateway, has a significant exposure problem. Our research using Shodan and Censys identified more than 1,000 publicly accessible Clawdbot gateway and control instances on the internet. While many deployments have authentication enabled, we found numerous instances that required no authentication at all, leaving API keys, conversation histories, and in some cases root shell access available to anyone who stumbles across them.

This isn’t a vulnerability in Clawdbot itself. It’s a predictable pattern that emerges when powerful autonomous systems are deployed without careful security hardening. And given what Clawdbot can access—your messages, your credentials, your filesystem, your browser sessions—the stakes are extraordinarily high.

If you’re running Clawdbot, stop reading and run clawdbot security audit immediately. Then come back.

📚 This article is part of our ongoing series on AI Agent Security Risks. As autonomous AI systems proliferate, we’re documenting the emerging attack surfaces that security teams need to understand. See the complete series at the end of this article.

What is Clawdbot and Why Should You Care?

Clawdbot has exploded onto the scene as an open-source personal AI assistant that runs locally on your own hardware. Created by Peter Steinberger, it connects frontier AI models like Claude to your messaging platforms—WhatsApp, Telegram, Slack, Discord, Signal, iMessage, Microsoft Teams—and gives the AI the ability to actually do things on your behalf.

The appeal is obvious: instead of copy-pasting between apps, you text your AI assistant and it handles everything. It reads your emails, manages your calendar, searches the web, runs shell commands, controls your browser, and maintains long-term memory across sessions. The lobster mascot (🦞) is cute. The capabilities are genuinely impressive.

But here’s what the hype cycle misses: Clawdbot is not a productivity app. It’s infrastructure.

This is part of a broader pattern we’ve been tracking. As we covered in our analysis of agentic desktop agents and local file access security, AI systems that operate on your local machine introduce fundamentally different risk profiles than cloud-based assistants.

Specifically, it’s an agent gateway that bridges large language models with real execution environments. Once deployed, it becomes part of your attack surface. The Gateway listens on port 18789 by default, handles message routing, manages credentials, and executes tools. The Control UI provides a web-based admin interface for configuration, conversation inspection, and key management.
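A quick way to see whether that default port is part of your own attack surface is to test it from a machine outside your network. The following is a minimal sketch using only the Python standard library; the function name `port_is_open` and the timeout are illustrative choices, and it should only be pointed at hosts you operate.

```python
import socket

DEFAULT_GATEWAY_PORT = 18789  # default Gateway port named in this article


def port_is_open(host, port=DEFAULT_GATEWAY_PORT, timeout=3.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        # create_connection resolves the host and performs the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False


if __name__ == "__main__":
    # Replace with your own public address; run this from outside your network.
    print(port_is_open("127.0.0.1"))
```

A `True` result from an external vantage point means the Gateway port is reachable from the internet. It says nothing about whether authentication is enforced, so treat it as a first check rather than an audit.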

When that infrastructure is exposed without proper protection, you’re not just leaking data—you’re handing over the keys to your digital life.

The Numbers: What We Found

Using internet scanning platforms, we identified Clawdbot instances across the public internet with concerning results:

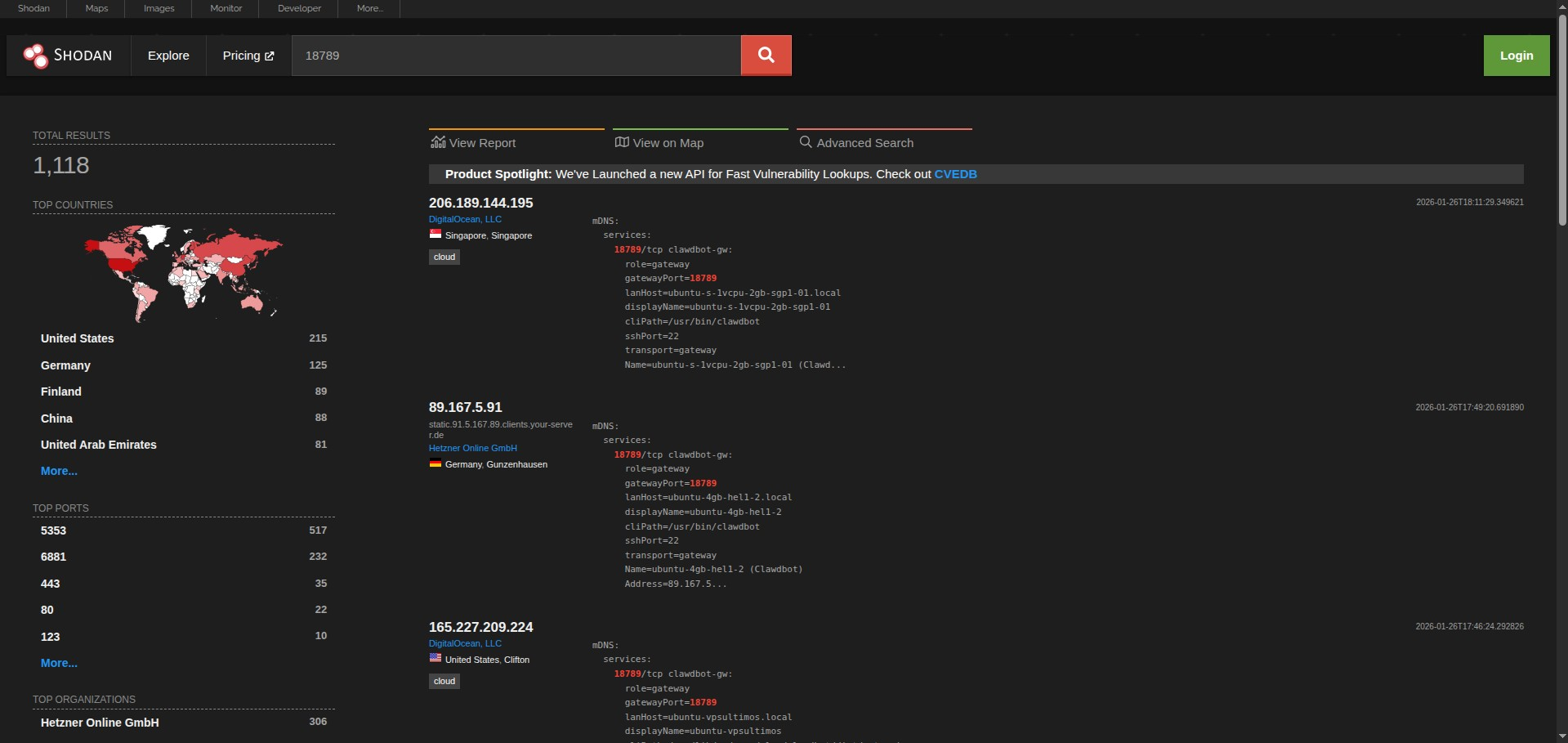

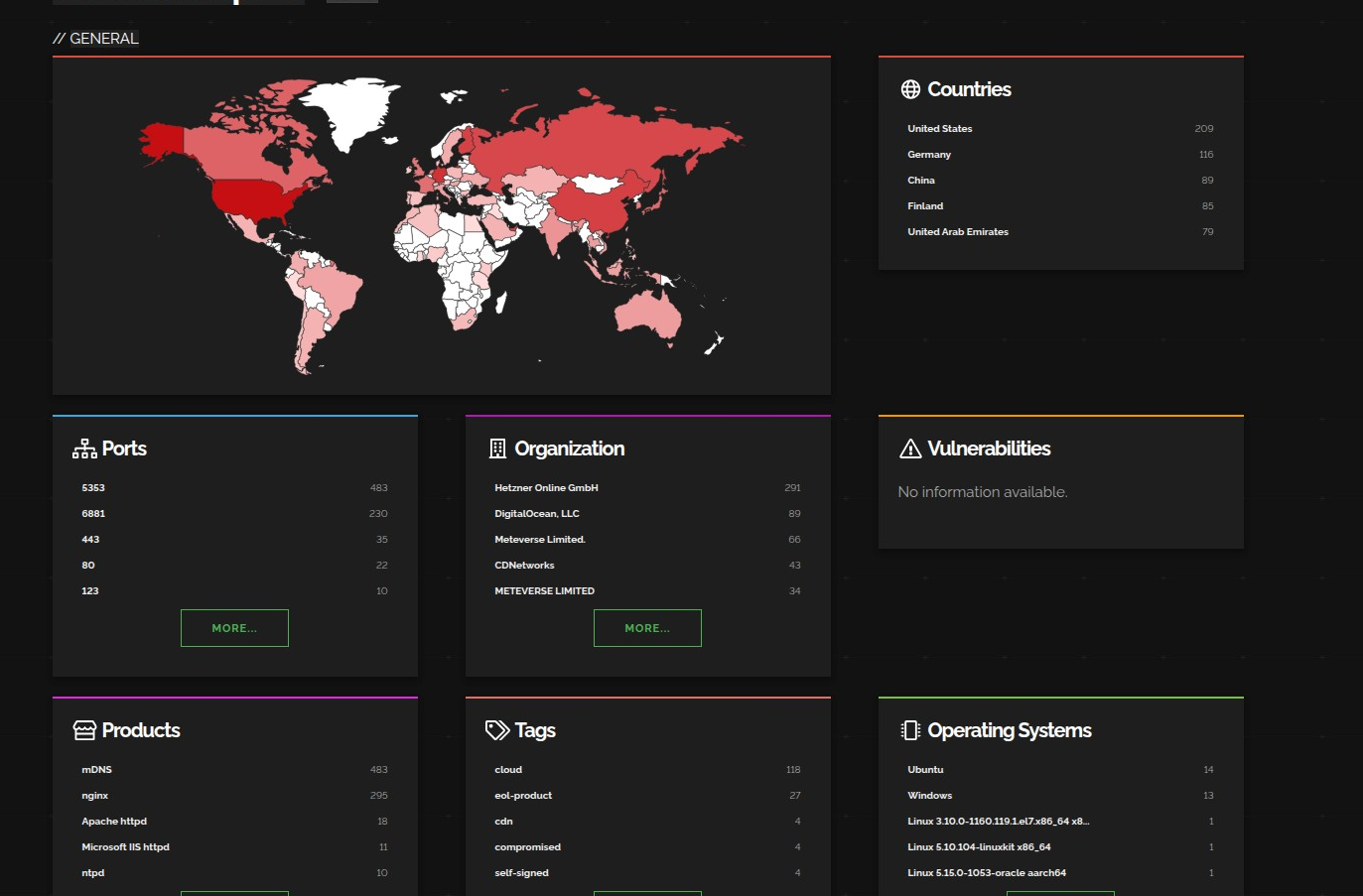

Shodan Results: Port 18789 (Default Gateway Port)

- Total exposed instances: 1,091

Top countries by exposure:

- United States: 210

- Germany: 117

- China: 89

- Finland: 86

- United Arab Emirates: 79

Top services detected: mDNS (486), BitTorrent/6881 (230), HTTPS (35), HTTP (22)

Top hosting providers:

- Hetzner Online GmbH: 294

- DigitalOcean, LLC: 89

- Meteverse Limited: 66

- CDNetworks: 43

Shodan Results: clawdbot-gw Service Identifier

- Total identified gateways: 321

Top countries:

- United States: 85

- Germany: 67

- Finland: 54

- United Arab Emirates: 52

- Singapore: 12

Top hosting providers:

- Hetzner Online GmbH: 190

- DigitalOcean, LLC: 58

- RackNerd LLC: 5

- OVH SAS: 4

- HostPapa: 3

Censys Results

- Total indexed hosts: 306

- Geographic distribution mirrors Shodan, with heavy concentration in US (167) and Germany (42)

Combined Analysis

- Total unique deployments identified: 1,000+ across multiple search methods

- Heavy concentration on budget VPS providers (Hetzner, DigitalOcean) suggests hobbyist/developer deployments

- Finland’s disproportionate representation (population ~5.5M) may reflect Hetzner’s Finnish data center region at least as much as local adoption

- Many instances have authentication enabled but remain high-value targets

- A subset required no authentication whatsoever

- Several exposed instances had active integrations to Slack, WhatsApp, Gmail, X (Twitter), Google Calendar, and more
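The provider concentration can be put in numbers using the Shodan counts reported above. This is a small arithmetic sketch; the helper name `share` is illustrative, and the only inputs are the figures already listed in this article.

```python
def share(count, total):
    """Percentage of `total` represented by `count`, rounded to one decimal."""
    return round(100 * count / total, 1)


PORT_SCAN_TOTAL = 1091   # Shodan hits on port 18789
GATEWAY_TOTAL = 321      # Shodan hits for the clawdbot-gw identifier

# Hetzner and DigitalOcean as a share of each result set.
port_scan_share = share(294 + 89, PORT_SCAN_TOTAL)
gateway_share = share(190 + 58, GATEWAY_TOTAL)

if __name__ == "__main__":
    print(f"Port 18789 results on Hetzner/DigitalOcean: {port_scan_share}%")
    print(f"clawdbot-gw results on Hetzner/DigitalOcean: {gateway_share}%")
```

The two providers account for roughly a third of the raw port results but more than three quarters of the hosts that identify as clawdbot-gw, which suggests the service-identifier search is the better indicator of where real deployments are hosted.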

What’s Actually at Risk: The Threat Model

An exposed Clawdbot Control interface isn’t just a configuration leak—it’s a complete compromise of everything the agent can see and do.

Read Access Gets You:

- Complete credential dumps: Anthropic API keys, Telegram bot tokens, Slack OAuth secrets, signing keys

- Full conversation history: Every message the agent has processed, potentially months of private communications

- Integration metadata: What platforms are connected, what permissions are granted, what tools are available

Write/Execute Access Enables:

- Impersonation attacks: Send messages as the operator across any connected platform

- Perception manipulation: Filter, modify, or inject messages before the human sees them

- Data exfiltration: Extract information through legitimate-looking integrations

- Arbitrary command execution: On instances with shell access enabled, run any command on the host system

- Browser session hijacking: For instances with browser control enabled, access logged-in sessions across all websites

The browser control capability is particularly concerning. As we detailed in The Agentic Browser Revolution: A CISO’s Guide to the AI Attack Surface, AI agents with browser automation can inherit all the permissions of your logged-in sessions—banking, email, cloud consoles, everything.

In the worst cases documented during security research, an attacker could have run commands as root inside containers with no privilege separation.

A Real-World Example: Signal Pairing URI Exposure

One particularly striking finding involved a Signal messenger integration. A researcher discovered a publicly exposed Clawdbot instance where someone had set up their Signal account, complete with a device pairing URI sitting in a world-readable temp file.

Signal’s vaunted end-to-end encryption becomes meaningless when the pairing credential is exposed. Tap that URI on any phone with Signal installed, and you’re paired to the account with full access.

The owner? An “AI Systems engineer” who presumably understood the technology but missed a critical security step.

Why This Happens: The Reverse Proxy Problem

Many of these exposures stem from a classic infrastructure misconfiguration rather than an exotic vulnerability.

Clawdbot supports cryptographic device authentication with a challenge-response protocol—solid security engineering. The problem is that local connections are auto-approved without authentication by default. This makes sense for local development but creates issues when deployed behind a reverse proxy.

Here’s what happens:

- User deploys Clawdbot behind nginx or Caddy on the same host

- External traffic arrives through the reverse proxy

- Proxy forwards to localhost—so connections appear to originate from 127.0.0.1

- Clawdbot sees a “local” connection and auto-approves without authentication

- Authentication is effectively bypassed for everyone

The gateway.trustedProxies configuration option exists but defaults to empty. When empty, X-Forwarded-For headers are ignored. The gateway just uses the socket address—which behind a reverse proxy is always loopback.
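The bypass is easier to see in code. The sketch below is a simplified model of the behavior described above, not Clawdbot’s actual implementation: the function names are hypothetical, and it assumes that a non-empty trusted-proxy list causes the gateway to honor X-Forwarded-For only when the direct peer is one of those proxies.

```python
import ipaddress


def effective_client_ip(socket_addr, x_forwarded_for, trusted_proxies):
    """Return the address an access decision should be based on.

    Hypothetical model: the forwarded header is honored only when the
    direct peer (socket address) is a configured trusted proxy.
    """
    peer = ipaddress.ip_address(socket_addr)
    trusted = [ipaddress.ip_network(p) for p in trusted_proxies]
    if x_forwarded_for and any(peer in net for net in trusted):
        hops = [h.strip() for h in x_forwarded_for.split(",")]
        # Walk right to left; the first hop that is not a trusted proxy
        # is the closest address we did not add ourselves.
        for hop in reversed(hops):
            addr = ipaddress.ip_address(hop)
            if not any(addr in net for net in trusted):
                return addr
    # Empty trusted list (or no header): fall back to the socket address.
    return peer


def is_auto_approved(socket_addr, x_forwarded_for, trusted_proxies):
    """Model of 'local connections are auto-approved'."""
    client = effective_client_ip(socket_addr, x_forwarded_for, trusted_proxies)
    return client.is_loopback


if __name__ == "__main__":
    # Remote visitor arriving through a same-host reverse proxy.
    print(is_auto_approved("127.0.0.1", "203.0.113.7", []))             # empty default
    print(is_auto_approved("127.0.0.1", "203.0.113.7", ["127.0.0.1"]))  # proxy declared
```

With the empty default, the remote visitor at 203.0.113.7 is indistinguishable from a local user because only the loopback socket address is considered. Declaring the proxy as trusted lets the real client address drive the decision.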

This is Security 101 for web applications, but the impact is amplified because you’re not just exposing a website—you’re exposing an autonomous agent with persistent authority over your digital presence.

Associated Vulnerabilities

Beyond misconfiguration, the broader Clawdbot deployment surface intersects with several CVEs that could compound exposure:

| CVE | Severity | Description |
| --- | --- | --- |
| CVE-2025-13836 | 9.1 (Critical) | HTTP Content-Length handling allows reading large amounts of data into memory, potentially causing OOM or DoS |
| CVE-2025-12084 | 3.3 (Low) | xml.dom.minidom quadratic complexity when building nested documents |
| CVE-2024-9287 | 7.8 (High) | CPython venv module path handling allows command injection in virtual environment activation scripts |

While these aren’t Clawdbot-specific, they affect the underlying runtime environments where Clawdbot is commonly deployed.

The Structural Problem: Why AI Agents Are Hard to Secure

Clawdbot exposes a fundamental tension in AI agent design. For an agent to be useful, it requires capabilities that traditional security models were built to prevent.

This challenge extends beyond Clawdbot to the entire ecosystem of AI agent frameworks. The Model Context Protocol (MCP), which many agents use for tool integration, has its own set of vulnerabilities we documented in AI Agent Security Crisis: MCP Vulnerabilities Exposed.

It needs to read your messages because it can’t respond to communications without seeing them.

It needs to store your credentials because it can’t authenticate to external services without secrets.

It needs command execution because it can’t run tools without shell access.

It needs persistent state because it can’t maintain conversational context without stored data.

Remove any of these capabilities and the agent becomes useless. Keep them and you’ve created a single point of compromise for your entire digital life.

The security models we’ve relied on for decades rest on certain assumptions that AI agents violate by design:

- Application sandboxing: The agent operates outside the sandbox because it needs to

- End-to-end encryption: Terminates at the agent because it needs to read the messages

- Principle of least privilege: The agent’s value proposition is maximum privilege

This isn’t a bug. It’s the architecture. Which means securing these systems requires a fundamentally different approach.

Practical Recommendations

If you’re running Clawdbot (or considering it), treat this as production-grade, high-risk infrastructure. Here’s the minimum viable security posture:

Immediate Actions

Never expose unauthenticated on 0.0.0.0

- Configure gateway.auth.password if exposing beyond localhost

- Set gateway.trustedProxies when running behind a reverse proxy
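These two rules can be expressed as a simple pre-deployment check. The sketch below is a hypothetical helper, not a substitute for the built-in audit command: it assumes the configuration has been loaded into a Python dict, the `gateway.auth.password` and `gateway.trustedProxies` keys come from this article, and the `bind` key name is an assumption made for illustration.

```python
import ipaddress


def audit_gateway_config(config, behind_reverse_proxy=False):
    """Return a list of warnings for a gateway config dict (illustrative only)."""
    gateway = config.get("gateway", {})
    warnings = []

    bind = gateway.get("bind", "127.0.0.1")  # "bind" is an assumed key name
    try:
        loopback_only = ipaddress.ip_address(bind).is_loopback
    except ValueError:
        # Not an IP literal; treat only the name "localhost" as loopback.
        loopback_only = bind == "localhost"

    password = gateway.get("auth", {}).get("password")
    if not loopback_only and not password:
        warnings.append("Gateway is bound beyond loopback without gateway.auth.password")

    if behind_reverse_proxy and not gateway.get("trustedProxies"):
        warnings.append("Reverse proxy in use but gateway.trustedProxies is empty")

    return warnings


if __name__ == "__main__":
    risky = {"gateway": {"bind": "0.0.0.0"}}
    for warning in audit_gateway_config(risky, behind_reverse_proxy=True):
        print("WARNING:", warning)
```

The second check matters even when the Gateway itself is bound to loopback, because a same-host proxy makes every request look local.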

Use Tailscale Serve or Cloudflare Tunnel instead of direct exposure

- Tailscale keeps the Gateway on loopback and handles access control

- Cloudflare Tunnel provides zero-trust access without opening firewall ports

- Both are free for personal use

Run the security audit

clawdbot security audit

clawdbot security audit --deep

clawdbot security audit --fix

Architectural Best Practices

Don’t run on your primary machine

- Use a VM, container, or dedicated device

- Limit blast radius if compromise occurs

Use test accounts where possible

- Don’t connect your primary WhatsApp/Telegram/Signal accounts

- Create dedicated accounts for AI agent interactions

Treat credential stores like secrets management systems

- Because that’s what they functionally are

- Review what’s stored in ~/.clawdbot/credentials/
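Part of that review is confirming that nothing in the directory is readable by other users on the host, the same class of mistake as the world-readable pairing file described earlier. The following is a minimal sketch for POSIX systems; the function name is illustrative, and the path is the one named above.

```python
import os
import stat
from pathlib import Path


def find_loose_permissions(root):
    """Return (path, mode) pairs for entries that group or others can access."""
    root = Path(root).expanduser()
    findings = []
    for dirpath, dirnames, filenames in os.walk(root):
        for name in [*dirnames, *filenames]:
            path = Path(dirpath) / name
            if path.is_symlink():
                continue  # symlink modes are not meaningful on most systems
            mode = stat.S_IMODE(path.lstat().st_mode)
            # Any group or other permission bit on a secret is too much.
            if mode & (stat.S_IRWXG | stat.S_IRWXO):
                findings.append((str(path), oct(mode)))
    return findings


if __name__ == "__main__":
    for path, mode in find_loose_permissions("~/.clawdbot/credentials/"):
        print(f"{mode} {path}")
```

Anything the script prints is a candidate for tightening to owner-only permissions, for example 0600 for files and 0700 for directories.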

Recognize conversation history as sensitive data

- Months of context about how you think, what you’re working on, who you communicate with

- That’s intelligence, and it should be protected accordingly

Understand your DM policies

- Audit who can trigger your bot

- Default to pairing mode and explicitly approve trusted contacts

clawdbot doctor

What This Means for the Industry

Clawdbot isn’t an outlier—it’s an early manifestation of a broader shift toward agent-based computing. These findings aren’t meant to single out one project. The Clawdbot team has solid security documentation and has been responsive to community feedback.

The lesson is that autonomous agents fundamentally change the blast radius of misconfigurations. As they become more capable, the risk they introduce increases proportionally.

This pattern repeats across the AI agent landscape:

- Workflow automation platforms like Zapier, n8n, and Power Automate create similar concentration-of-capability risks, as we explored in The Workflow Automation Blind Spot

- Agent skills and plugins represent the next frontier of attack surface expansion—third-party code running with your agent’s permissions, detailed in our latest piece on Agent Skills: The Next AI Attack Surface

We’re going to see more of this. Every AI agent framework—whether open-source or commercial—will face similar challenges. The economics of adoption will push people toward deployment before security hardening. Internet-wide scanners will find those deployments within hours.

The organizations that adapt their security posture first will have a significant advantage. The ones that don’t will learn the hard way that handing shell access to an AI is “spicy,” as the Clawdbot docs themselves acknowledge.

The robot butler is brilliant. Just make sure the front door is locked.

Related Reading: AI Agent Security Series

This article is part of CISO Marketplace’s ongoing coverage of AI agent security risks. As autonomous systems become mainstream infrastructure, understanding these attack surfaces is critical for security leaders.

The Complete Series:

- Agentic Desktop Agents: AI Local File Access Security How AI assistants running on your local machine create new vectors for data exfiltration and privilege escalation.

- The Agentic Browser Revolution: A CISO’s Guide to the AI Attack Surface Browser automation agents inherit your logged-in sessions. Here’s what that means for enterprise security.

- The Workflow Automation Blind Spot: Zapier, n8n, Power Automate Security No-code automation platforms concentrate credentials and capabilities in ways most security teams aren’t monitoring.

- AI Agent Security Crisis: MCP Vulnerabilities Exposed The Model Context Protocol enables powerful AI integrations—and introduces systemic vulnerabilities across the ecosystem.

- Agent Skills: The Next AI Attack Surface Third-party skills and plugins run with your agent’s permissions. The supply chain implications are significant.

- Clawdbot Exposure: 1,000+ AI Agents on the Public Internet (This article) Real-world deployment analysis reveals widespread misconfiguration of autonomous AI infrastructure.

Subscribe to the CISO Marketplace newsletter for updates as we continue this series.

Resources

- Clawdbot Security Documentation: https://docs.clawd.bot/gateway/security

- Shodan Search (Port 18789): https://www.shodan.io/search?query=18789

- Shodan Search (clawdbot-gw): https://www.shodan.io/search?query=clawdbot-gw

- Tailscale (Free Zero-Trust Access): https://tailscale.com

- Cloudflare Tunnel (Free): https://developers.cloudflare.com/cloudflare-one/connections/connect-apps/

Disclosure

This research was conducted using publicly available internet scanning services. No unauthorized access was attempted. Exposed systems were identified through their distinctive HTTP fingerprints. Individual system operators were not contacted directly, though this article serves as public notification of the exposure risk.

*Have questions about AI agent security or need help with a security assessment? Contact the CISO Marketplace team.*